Last week I introduced SundaySky’s video generator and explained some of its performance characteristics. Today I’ll discuss how SmartVideo is driven by data, and the ways in which it adapts to data variation.

DATA INTELLIGENCE

Many SmartVideo use cases utilize data and content that customers already created for their web sites, such as product features or user reviews. Repurposing this data for video isn’t always trivial. For example, most web page text is too long to show on-screen or to narrate.

Consider a user review – how do we determine which phrase to show on-screen? Which image to show from five available images, so it will look great in the video and against the background? Which are the two most important features to show on-screen for a camera with ten key features? Dealing with these issues is collectively called data intelligence.

VIDEO THAT ADAPTS TO DATA

“Intelligence is the ability to adapt to change,” said Stephen Hawking. And indeed, what puts the “smart” in SmartVideo is its ability to adapt and adjust itself to data changes.

Effective SmartVideo delivers an engaging message by making the best use of state-of-the-art creative elements, driven by the available data. This means data may also affect structure, layout, timing and style.

Consider the following scenario for a SmartVideo scene showing five product features on-screen: Three features are highlighted in turn using some 3D animation. As each feature is highlighted, a relevant clip demonstrates the feature, and narration explains its benefits. Finally, the scene transitions to the next scene. What are some data variations that we may need to adapt to?

- If there are fewer than five features available, we adapt the layout and possibly also font sizes.

- The length of feature descriptions varies, which may also affect layout.

- The selection of highlighted features depends on their value, but also on whether a good enough clip exists for them.

- We may want to vary the highlight order, making sure everything still looks seamless.

- The duration of the narration is different for each feature. The highlight of a feature needs to start only after the previous highlight (including the narration) has ended. The same is true for the scene exit transition.

- What if we want to sync the highlight events to the beat of the music track?

- What if the entire scene is not applicable for certain products? Adapt!
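To make the selection rule from the list above concrete, here is a minimal Python sketch. It is only an illustration, not how SmartVideo actually does it: the `Feature` fields, the `value` and `clip_quality` scores, and the 0.5 threshold are all assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    value: float         # hypothetical importance score (higher is better)
    clip_quality: float  # hypothetical 0..1 score; 0 means no usable clip


def pick_highlights(features, max_highlights=3, min_clip_quality=0.5):
    """Return up to max_highlights features, best value first,
    skipping features whose clip is not good enough."""
    eligible = [f for f in features if f.clip_quality >= min_clip_quality]
    ranked = sorted(eligible, key=lambda f: f.value, reverse=True)
    return ranked[:max_highlights]


features = [
    Feature("zoom", value=0.9, clip_quality=0.8),
    Feature("battery", value=0.8, clip_quality=0.2),  # valuable, but weak clip
    Feature("sensor", value=0.7, clip_quality=0.9),
    Feature("wifi", value=0.4, clip_quality=0.6),
]

highlights = pick_highlights(features)
print([f.name for f in highlights])  # ['zoom', 'sensor', 'wifi']
```

Note that "battery" is dropped despite its high value because its clip falls below the assumed quality threshold, and that the function simply returns fewer items when fewer features qualify, which is the case the layout then has to adapt to.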

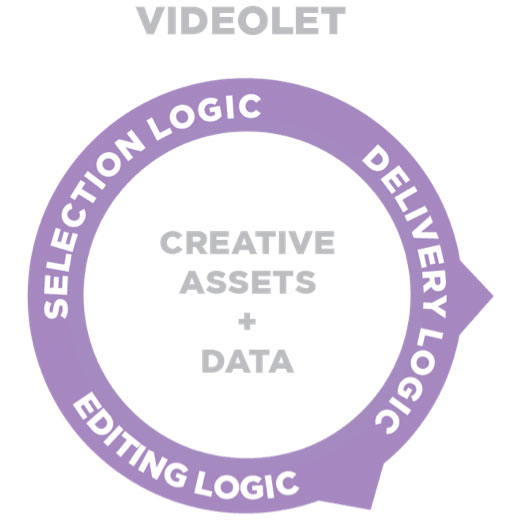

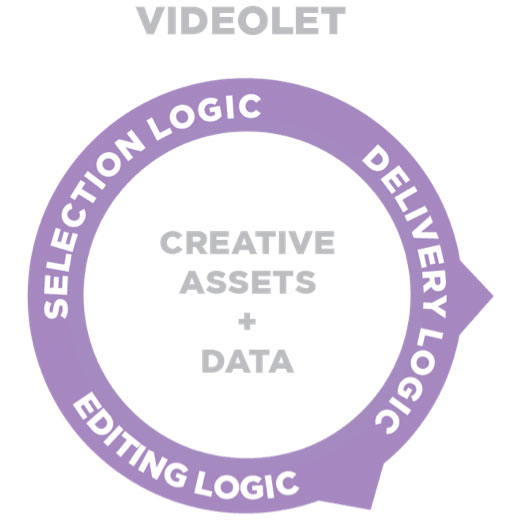

The Videolet, which defines how the video is generated from data input, needs to describe these dependencies and variations, and the video generator needs to be able to omit, delay, pace, stretch, and repeat video elements both in time and space accordingly.

At SundaySky, we developed a markup language called VSML (Video Scene Markup Language) to capture the semantics and dependencies of the video script in an object-oriented manner. We like to say that VSML consists of “director/scriptwriter instructions.” It can refer to entities in the scene (narrator, camera, animation, text, character or any custom object), instructing them to invoke their behaviors while synchronizing them in time and space. For our example, it can tell a highlight sequence to start after the previous sequence has ended, and to construct the sequence from a narration, highlight animation, and feature clip, specifying how they should be synchronized.
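The timing dependency itself can be sketched in a few lines of Python. To be clear, this is not VSML and not our generator's code, just an illustration of the "start after the previous one has ended" rule. The `Highlight` fields, the `schedule` and `snap_up` helpers, and the optional `beat_s` parameter (for snapping starts to the music beat) are hypothetical names invented for this sketch.

```python
import math
from dataclasses import dataclass


@dataclass
class Highlight:
    feature: str
    narration_s: float  # narration length in seconds
    animation_s: float  # highlight animation length in seconds


def snap_up(t, beat_s):
    """Move t forward to the next beat boundary (no-op if beat_s is None)."""
    return t if beat_s is None else math.ceil(t / beat_s) * beat_s


def schedule(highlights, start_s=0.0, beat_s=None):
    """Return (timeline, exit_start). Each highlight starts only after the
    previous one, including its narration, has ended; so does the exit."""
    timeline = []
    t = start_s
    for h in highlights:
        begin = snap_up(t, beat_s)
        end = begin + max(h.narration_s, h.animation_s)
        timeline.append((h.feature, begin, end))
        t = end
    return timeline, snap_up(t, beat_s)


scene = [
    Highlight("zoom", narration_s=4.0, animation_s=1.5),
    Highlight("sensor", narration_s=2.5, animation_s=1.5),
    Highlight("wifi", narration_s=3.0, animation_s=1.5),
]

timeline, exit_start = schedule(scene)
for feature, begin, end in timeline:
    print(f"{feature}: {begin:.1f}s to {end:.1f}s")
print(f"exit transition starts at {exit_start:.1f}s")
```

Because start times are derived from the durations rather than hard-coded, swapping in a longer narration or dropping a feature shifts everything downstream automatically. Passing `beat_s=2.0`, for example, delays each start (and the exit) to the next two-second beat.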

INTENTIONAL VARIATION

When your customers view more than one SmartVideo, it’s important to introduce intentional variation into the video. After all, SmartVideo is all about customer engagement, so captivate them at each view. Variation can manifest in structure (which scenes to include), style (color, pace, music, transitions, voice, theme), or content (which data, text, images, animations and narrations to include). Variation also plays a central role in message sequencing and in automatic creative optimization (which will be the topic of a future post).
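One simple way to get repeatable variation is to derive the choices from the viewer and the view count. The Python sketch below is an assumption-laden illustration, not a description of our production logic: the `STYLES` table, its values, and the `pick_variation` function are invented for the example.

```python
import hashlib

# Hypothetical style dimensions and options, for illustration only.
STYLES = {
    "music": ["upbeat", "calm", "cinematic"],
    "theme": ["light", "dark"],
    "pace": ["fast", "relaxed"],
}


def pick_variation(viewer_id, view_number, options=STYLES):
    """Pick one option per dimension. The starting point depends on the
    viewer, and each subsequent view rotates to the next option."""
    chosen = {}
    for dimension, choices in sorted(options.items()):
        digest = hashlib.sha256(f"{viewer_id}:{dimension}".encode()).digest()
        base = int.from_bytes(digest[:4], "big")
        chosen[dimension] = choices[(base + view_number) % len(choices)]
    return chosen


for view in range(3):
    print(view, pick_variation("viewer-42", view))
```

The same viewer and view number always produce the same result, which keeps a video reproducible, while consecutive views by the same viewer differ in every dimension that has at least two options.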

Between new customer use cases and our creative team’s constant innovation, it’s a joy for me to see what creative ideas get implemented on top of the smart technology, pushing the envelope on what we can do. These are exciting times for SmartVideo technologists like me.